Earlier this month the IJG unleashed version 8 of its ubiquitous libjpeg library on the world. Eager to try out the “major breakthrough in image coding technology” promised in the README file accompanying v7, I downloaded the release. A glance at the README file suggests something major indeed is afoot:

Version 8.0 is the first release of a new generation JPEG standard to overcome the limitations of the original JPEG specification.

The text also hints at the existence of a document detailing these marvellous new features, and a Google search later a copy has found its way onto my monitor. As I read, however, my state of mind shifts from an initial excited curiosity, through bewilderment and disbelief, finally arriving at pure merriment.

Already on the first page it becomes clear no new JPEG standard in fact exists. All we have is an unsolicited proposal sent to the ITU-T by members of the IJG. Realising that even the most brilliant of inventions must start off as mere proposals, I carry on reading. The summary informs me that I am about to witness the introduction of three extensions to the T.81 JPEG format:

- An alternative coefficient scan sequence for DCT coefficient serialization

- A SmartScale extension in the Start-Of-Scan (SOS) marker segment

- A Frame Offset definition in or in addition to the Start-Of-Frame (SOF) marker segment

Together these three extensions will, it is promised, “bring DCT based JPEG back to the forefront of state-of-the-art image coding technologies.”

Alternative scan

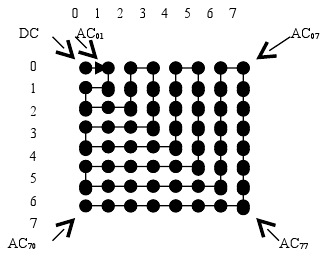

The first of the proposed extensions introduces an alternative DCT coefficient scan sequence to be used in place of the zigzag scan employed in most block transform based codecs.

Alternative scan sequence

The advantage of this scan would be that combined with the existing progressive mode, it simplifies decoding of an initial low-resolution image which is enhanced through subsequent passes. The author of the document calls this scheme “image-pyramid/hierarchical multi-resolution coding.” It is not immediately obvious to me how this constitutes even a small advance in image coding technology.
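For reference, the conventional zigzag order is easy to generate. The sketch below is an illustration only, not code from the IJG proposal, and the helper names are invented for this example. It also reports how far into the zigzag sequence a decoder must read before it holds every coefficient of the top-left k×k sub-block, which is the situation a scan organised around square sub-blocks would presumably tidy up.

```python
def zigzag_order(n=8):
    """Return (row, col) pairs of an n x n block in conventional zigzag order."""
    order = []
    for s in range(2 * n - 1):              # s = row + col selects one anti-diagonal
        diagonal = [(r, s - r) for r in range(n) if 0 <= s - r < n]
        if s % 2 == 0:
            diagonal.reverse()              # even diagonals run from bottom-left to top-right
        order.extend(diagonal)
    return order


def last_index_of_subblock(k, n=8):
    """Zigzag index of the last coefficient in the top-left k x k sub-block."""
    position = {rc: i for i, rc in enumerate(zigzag_order(n))}
    return max(position[(r, c)] for r in range(k) for c in range(k))


if __name__ == "__main__":
    for k in (1, 2, 4, 8):
        print(k, last_index_of_subblock(k))
```

With the zigzag scan, the last coefficient of the 4×4 sub-block sits at index 24, so 25 coefficients have to be read to obtain the 16 that matter for a half-resolution preview.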

At this point I am beginning to suspect that our friend from the IJG has been trapped in a half-world between interlaced GIF images transmitted down noisy phone lines and today’s inferno of SVC, MVC, and other buzzwords.

(Not so) SmartScale

Disguised behind this camel-cased moniker we encounter a method which, we are told, will provide better image quality at high compression ratios. The author has combined two well-known (to us) properties in a (to him) clever way.

The first property concerns the perceived impact of different types of distortion in an image. When encoding with JPEG, as the quantiser is increased, the decoded image becomes ever more blocky. At a certain point, a better subjective visual quality can be achieved by down-sampling the image before encoding it, thus allowing a lower quantiser to be used. If the decoded image is scaled back up to the original size, the unpleasant, blocky appearance is replaced with a smooth blur.

The second property belongs to the DCT where, as we all know, the top-left (DC) coefficient is the average of the entire block, its neighbours represent the lowest frequency components etc. A top-left-aligned subset of the coefficient block thus represents a low-resolution version of the full block in the spatial domain.
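This property is easy to check numerically. The following is a minimal pure-Python sketch, assuming an orthonormal DCT-II and a simple m/n rescaling of the kept coefficients so that the block mean is preserved; the function names are invented for illustration and nothing here is taken from libjpeg.

```python
import math


def dct_matrix(n):
    """Orthonormal DCT-II basis as an n x n list of rows."""
    rows = []
    for k in range(n):
        scale = math.sqrt((1.0 if k == 0 else 2.0) / n)
        rows.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                     for i in range(n)])
    return rows


def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def dct_downscale(block, m):
    """Shrink an n x n block to m x m using only its top-left m x m DCT coefficients."""
    n = len(block)
    cn, cm = dct_matrix(n), dct_matrix(m)
    coeffs = matmul(matmul(cn, block), transpose(cn))    # forward n x n DCT
    kept = [[coeffs[u][v] * m / n for v in range(m)]     # low-frequency corner,
            for u in range(m)]                           # rescaled to keep the mean
    return matmul(matmul(transpose(cm), kept), cm)       # inverse m x m DCT


if __name__ == "__main__":
    ramp = [[float(col) for col in range(8)] for _ in range(8)]
    for row in dct_downscale(ramp, 4):
        print([round(v, 2) for v in row])
```

Each block is processed in isolation, with no knowledge of its neighbours.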

In his flash of genius, our hero came up with the idea of using the DCT for down-scaling the image. Unfortunately, he appears to possess precious little knowledge of sampling theory and human visual perception. Any block-based resampling will inevitably produce sharp artefacts along the block edges. The human visual system is particularly sensitive to sharp edges, so this is one of the most unwanted types of distortion in an encoded image.

Despite the obvious flaws in this approach, I decided to give it a try. After all, the software is already written, and it allows downscaling by a factor of 8/N for N from 8 to 16.

I encoded a 1280×720 test image with each of the nine scaling options, from unity to half size, each time adjusting the quality parameter for a final encoded file size of no more than 200000 bytes. The following table presents the encoded file size, the libjpeg quality parameter used, and the SSIM metric for each of the images.

| Scale | Size (bytes) | Quality | SSIM |
|---|---|---|---|
| 8/8 | 198462 | 59 | 0.940 |
| 8/9 | 196337 | 70 | 0.936 |
| 8/10 | 196133 | 79 | 0.934 |
| 8/11 | 197179 | 84 | 0.927 |
| 8/12 | 193872 | 89 | 0.915 |
| 8/13 | 197153 | 92 | 0.914 |
| 8/14 | 188334 | 94 | 0.899 |
| 8/15 | 198911 | 96 | 0.886 |
| 8/16 | 197190 | 97 | 0.869 |
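Finding the quality setting for each row is a search for the highest value that still fits the size budget. A sketch of one way to automate it follows, assuming that file size never decreases as quality increases; `encode` is a hypothetical callback that runs whatever encoder is under test and returns the compressed bytes, and is not part of libjpeg.

```python
def highest_quality_within(encode, max_bytes, lo=1, hi=100):
    """Binary-search the largest quality in [lo, hi] whose output fits in max_bytes.

    `encode(quality)` is a caller-supplied (hypothetical) function returning the
    encoded image as bytes. Returns None if even the lowest quality is too big.
    """
    best = None
    while lo <= hi:
        mid = (lo + hi) // 2
        if len(encode(mid)) <= max_bytes:
            best = mid          # fits: remember it and try a higher quality
            lo = mid + 1
        else:
            hi = mid - 1        # too big: try a lower quality
    return best


if __name__ == "__main__":
    # Stand-in encoder whose output grows by 3000 bytes per quality step.
    fake_encode = lambda q: bytes(q * 3000)
    print(highest_quality_within(fake_encode, 200000))
```

A real encoder is not guaranteed to be strictly monotonic in size, so the result is worth checking against its immediate neighbours.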

Although the smaller images allow a higher quality setting to be used, the SSIM value drops steadily, from 0.940 at full size to 0.869 at half size. Numbers may of course be misleading, but the images below speak for themselves. These are cut-outs from the full image, the original on the left, unscaled JPEG-compressed in the middle, and JPEG with 8/16 scaling to the right.

Looking at these images, I do not need to hesitate before picking the JPEG variant I prefer.

Frame offset

The third and final extension proposed is quite simple and also quite pointless: a top-left cropping to be applied to the decoded image. The alleged utility of this feature would be to enable lossless cropping of a JPEG image. In a typical image workflow, however, JPEG is only used for the final published version, so the need for this feature appears quite far-fetched.

The grand finale

Throughout the text, the author makes references to “the fundamental DCT property for image representation.” In his own words:

This property was found by the author during implementation of the new DCT scaling features and is after his belief one of the most important discoveries in digital image coding after releasing the JPEG standard in 1992.

The secret is to be revealed in an annex to the main text. This annex quotes in full a post by the author to the comp.dsp Usenet group in a thread with the subject “why DCT”. Reading the entire thread proves quite amusing. A few excerpts follow.

The actual reason is much simpler, and therefore apparently very difficult to recognize by complicated-thinking people.

Here is the explanation:

What are people doing when they have a bunch of images and want a quick preview? They use thumbnails! What are thumbnails? Thumbnails are small downscaled versions of the original image! If you want more details of the image, you can zoom in stepwise by enlarging (upscaling) the image.

So with proper understanding of the fundamental DCT property, the MPEG folks could make their videos more scalable, but, as in the case of JPEG, they are unable to recognize this simple but basic property, unfortunately, and pursue rather inferior approaches in actual developments.

These are just phrases, and they don’t explain anything. But this is typical for the current state in this field: The relevant people ignore and deny the true reasons, and thus they turn in a circle and no progress is being made.

However, there are dark forces in action today which ignore and deny any fruitful advances in this field. That is the reason that we didn’t see any progress in JPEG for more than a decade, and as long as those forces dominate, we will see more confusion and less enlightenment. The truth is always simple, and the DCT *is* simple, but this fact is suppressed by established people who don’t want to lose their dubious position.

I believe a trip to the Total Perspective Vortex may be in order. Perhaps his tin-foil hat will save him.

I don’t think this IJG has much to do with the IJG of the 1990s. Tom Lane (the former organizer of the IJG) was not involved in v7 and later releases as far as I know. I think they’re almost solely the work of Guido Vollbeding, who does seem to be a bit kooky.

I think you’ve missed the point of “SmartScale”. As far as I can tell, the idea is to use smaller block sizes than 8×8. You can simulate this in the v7 library by scaling by 8/n on encode, with n less than 8, then scaling by n/8 on decode (you can even simulate it in version 6b with n=1,2,4). “SmartScale” stores the n/8 factor in the file so it can be automatically applied when decoding, kind of like EXIF rotation, and also suppresses the AC coefficients that are always zero. It doesn’t add support for larger DCTs with more than 64 outputs; when you scaled by 8/n with n greater than 8 you simply lost the high-frequency components with predictable results.

There is an ITU-T recommendation, T.851, which adds 16-bit samples and a new arithmetic coding mode to T.81. I had assumed that the promised improvements in v8 would be an implementation of that standard, but apparently not.

The tests I performed used the settings suggested in the manual, downscaling on encode and upscaling on decode.

You guys might be interested in reading this:

http://www.libjpeg-turbo.org/About/SmartScale

It’s sort of an expansion on the research conducted above, but I took into account not just DCT scaling but also the use of smaller block sizes. In general, the reduced block size feature (which is actually what SmartScale is: a means of generating a JPEG file with a block size other than 8×8) is clever, but I was unable to find a case in which it solved a problem that wasn’t already solved by either baseline JPEG or PNG. With regard to DCT scaling, I was able to find a couple of cases in which it produced similar image quality to very low-quality JPEG (basically trading off one type of artifact for another), but it was never better and often worse.

Feedback welcome.


The frame offset proposal has its use. It would allow for lossless rotation of an image that has dimensions that aren’t an integral multiple of the MCU size, without having to rely on the Exif “soft rotate” tag.

Rotation of digital photographs, particularly on smartphones, is part of the daily workflow for a large number of people.

While the Exif rotation tag has gained near-universal acceptance, some widely distributed JPEG decoder implementations ignore it. The biggest offender is MS-Windows, whose photo viewer ignores the tag, including in Vista and Windows 8.

The only workaround is to re-encode the image to remove (any) top/left edges introduced as a consequence of the rotation. Without a way to specify a frame offset, loss may occur.
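The arithmetic behind this can be sketched as follows. The example assumes a square MCU (16 pixels with 2×2 chroma subsampling, 8 without) and that the encoder pads only the right and bottom edges; the function name is made up for illustration. It reports how many padding pixels end up on the left and top edges after a clockwise rotation by a number of quarter turns, which is the amount a frame offset would need to hide.

```python
def leading_padding_after_rotation(width, height, mcu=16, quarter_turns_cw=1):
    """Return (left, top) padding in pixels after rotating the MCU grid.

    Assumes a square MCU and an image padded on its right and bottom edges
    up to a whole number of MCUs before rotation.
    """
    pad_right = (-width) % mcu
    pad_bottom = (-height) % mcu
    # Edge padding listed clockwise: top, right, bottom, left.
    edges = [0, pad_right, pad_bottom, 0]
    k = quarter_turns_cw % 4
    # A clockwise quarter turn moves top->right, right->bottom, bottom->left, left->top.
    rotated = [edges[(i - k) % 4] for i in range(4)]
    top, _right, _bottom, left = rotated
    return left, top


if __name__ == "__main__":
    for turns in (0, 1, 2, 3):
        print(turns, leading_padding_after_rotation(1000, 750, 16, turns))
```

For a 1000×750 image and a 16-pixel MCU, the padding is 8 pixels on the right and 2 on the bottom, so a single clockwise quarter turn leaves 2 padding pixels on the left edge. An image whose dimensions are exact multiples of the MCU size yields (0, 0) for every rotation.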

The only trouble with this IJG proposal is that it’s too late. Incorporating it into the JPEG standard now won’t solve the lossless rotation problem with decoders that don’t honor the Exif soft rotate tag. It would be wiser to encourage developers of offending decoders to adopt the Exif soft rotation tag instead.
